The SNS fanout pattern is a cloud architecture pattern in which a message published to an Amazon SNS topic is replicated and delivered to multiple subscribed endpoints, such as SQS queues, Kinesis Data Firehose delivery streams, Lambda functions, or HTTP/S endpoints.

We will explain this technique with a practical scenario.

Let’s assume we have an application that processes large files, such as videos or data files (the exact file type is unimportant). What matters is that processing is not instantaneous and can take minutes or even hours.

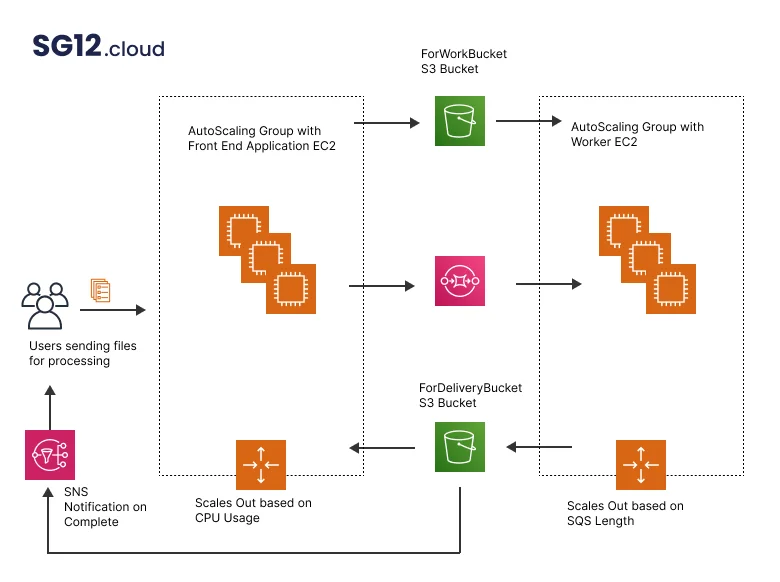

The diagram above shows the architecture for this application.

We have two Auto Scaling groups: one hosts the web application’s front-end machines, and the other hosts the worker machines (the ones that do the file processing).

The web application group will scale based on the CPU load of the instances. It will add more EC2 instances if there is a spike in traffic/requests and will scale down when the system load decreases.

The “worker” group also auto-scales, but based on the length of the SQS queue (the number of messages in the queue) rather than on CPU.
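One common way to turn queue length into a worker count is a “backlog per instance” target: divide the number of visible messages by how many messages a single instance can acceptably have waiting, round up, and clamp the result to the group’s size limits. The sketch below is only an illustration of that arithmetic under assumed values (a hypothetical target of 10 messages per instance and group limits of 0 to 20). In AWS, the queue length would typically come from the SQS CloudWatch metric `ApproximateNumberOfMessagesVisible`.

```python
import math


def desired_worker_count(
    visible_messages: int,
    backlog_per_instance: int = 10,  # hypothetical: messages one worker may have waiting
    min_size: int = 0,               # hypothetical: lets the group scale in to zero
    max_size: int = 20,              # hypothetical upper bound for the worker group
) -> int:
    """Return how many workers a backlog-per-instance policy would ask for."""
    if backlog_per_instance <= 0:
        raise ValueError("backlog_per_instance must be positive")
    # Round up so that a partial batch of messages still gets a worker.
    needed = math.ceil(visible_messages / backlog_per_instance)
    # Clamp to the Auto Scaling group's configured limits.
    return max(min_size, min(max_size, needed))


if __name__ == "__main__":
    for backlog in (0, 1, 25, 500):
        print(backlog, "->", desired_worker_count(backlog))
```

Setting `min_size` to 0 is what allows the worker group to shrink to zero instances once the queue is empty.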

A user uploads a file for processing through the front-end application. The app saves the file in an S3 bucket called “ForWorkBucket” and, at the same time, adds an entry to the SQS queue.

Instances in the worker group poll the queue and receive messages. Each message references a file that needs processing and is stored in “ForWorkBucket”.
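The queue message only needs to point at the uploaded object, not carry the file itself. A minimal sketch, assuming a hypothetical JSON schema with `bucket` and `key` fields plus an illustrative `job_id` (SQS does not prescribe any of these names):

```python
import json
import uuid


def make_job_message(bucket: str, key: str) -> str:
    """Build the message body the front end sends after saving the file to S3."""
    # Hypothetical schema: the worker only needs enough to locate the object.
    return json.dumps({"job_id": str(uuid.uuid4()), "bucket": bucket, "key": key})


def parse_job_message(body: str) -> tuple[str, str]:
    """Extract the (bucket, key) pair a worker needs to download the file."""
    payload = json.loads(body)
    return payload["bucket"], payload["key"]


if __name__ == "__main__":
    body = make_job_message("ForWorkBucket", "uploads/video-001.mp4")
    print(parse_job_message(body))
```

Keeping the message small and referencing S3 also keeps it well under the SQS message size limit, regardless of how large the file is.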

If the number of messages in the queue increases, the worker Auto Scaling group adds EC2 instances to handle the new requests.

After receiving a message, a worker instance downloads the file, processes it, and uploads the result to a second bucket, “ForDeliveryBucket”. When the file reaches this bucket, it triggers a Lambda function, which notifies the application’s front end that the worker machines have completed the workload and the files are ready.
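When S3 invokes a Lambda function for an object-created event, the event contains a `Records` list; each record carries the bucket name under `s3.bucket.name` and the URL-encoded object key under `s3.object.key`. The sketch below extracts the delivered files. `notify_front_end` is a hypothetical placeholder for whatever notification mechanism the front end actually uses.

```python
from urllib.parse import unquote_plus


def notify_front_end(bucket: str, key: str) -> None:
    """Hypothetical placeholder: replace with the real notification mechanism."""
    print(f"ready: s3://{bucket}/{key}")


def handler(event: dict, context: object) -> list[str]:
    """Lambda entry point for S3 object-created events on the delivery bucket."""
    delivered = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded (a space becomes '+'), so decode first.
        key = unquote_plus(record["s3"]["object"]["key"])
        notify_front_end(bucket, key)
        delivered.append(f"{bucket}/{key}")
    return delivered


if __name__ == "__main__":
    sample_event = {
        "Records": [
            {"s3": {"bucket": {"name": "ForDeliveryBucket"},
                    "object": {"key": "results/video+001.mp4"}}}
        ]
    }
    print(handler(sample_event, None))
```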

If processing succeeds, the worker deletes the original message from the queue. If it fails, the message is not deleted, so it becomes visible in the queue again once the SQS visibility timeout expires, and a worker instance can pick it up and redo the whole process.
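The receive/delete/retry cycle can be modeled with a small in-memory queue. This is not the SQS API; `ToyQueue` is a hypothetical toy class, with the current time passed in explicitly so its behavior is easy to follow. Receiving a message hides it for the visibility timeout, deleting it removes it for good, and a message that is never deleted becomes visible again once the timeout passes.

```python
class ToyQueue:
    """Hypothetical in-memory model of SQS visibility-timeout behavior."""

    def __init__(self, visibility_timeout: float) -> None:
        self.visibility_timeout = visibility_timeout
        self._bodies: dict[int, str] = {}
        self._visible_at: dict[int, float] = {}  # time each message reappears
        self._next_id = 0

    def send(self, body: str) -> int:
        msg_id = self._next_id
        self._next_id += 1
        self._bodies[msg_id] = body
        self._visible_at[msg_id] = float("-inf")  # visible immediately
        return msg_id

    def receive(self, now: float):
        """Return (id, body) of the first visible message and hide it, else None."""
        for msg_id, body in self._bodies.items():
            if self._visible_at[msg_id] <= now:
                self._visible_at[msg_id] = now + self.visibility_timeout
                return msg_id, body
        return None

    def delete(self, msg_id: int) -> None:
        """Called only after successful processing: the message is gone for good."""
        self._bodies.pop(msg_id, None)
        self._visible_at.pop(msg_id, None)


if __name__ == "__main__":
    q = ToyQueue(visibility_timeout=30)
    job = q.send("ForWorkBucket/uploads/video-001.mp4")
    print(q.receive(now=0))   # a worker takes the job
    print(q.receive(now=10))  # None: hidden while the first worker is busy
    print(q.receive(now=31))  # never deleted, so it reappears for a retry
    q.delete(job)             # this time processing succeeded
    print(q.receive(now=100)) # None: nothing left to do
```

This is also why the visibility timeout should be longer than the slowest expected processing time; otherwise a second worker may pick up a job that is still in progress.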

When all the files are processed and no jobs remain in the queue, the worker Auto Scaling group scales in to zero instances.

This example is a classic elastic worker-pool architecture built around an SQS queue. The queue decouples the application components and allows them to scale independently.

This is a simplified architecture for job processing. But what happens if each processed file needs several different outputs?

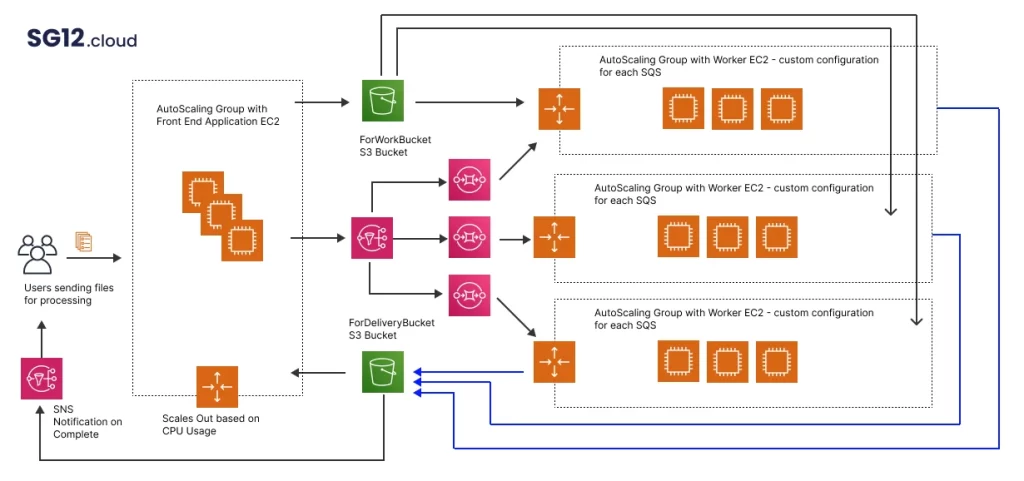

In this case, the SNS/SQS fanout pattern comes into play, and the architecture changes to something like the diagram above.

In this architecture, when the master file is uploaded, the front end publishes a message to an SNS topic instead of sending it to a single SQS queue.

The SNS topic has several subscribers. In this case, there is one SQS queue for each output file type (other services can subscribe as well). So, for x desired outputs, we have x independent SQS queues, and each queue has its own Auto Scaling group of workers that process the master file.
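The core of the fanout is that one publish produces one copy per subscriber. The sketch below is a hypothetical in-memory model (`ToyTopic`, the ARN, and the queue names are illustrative, not the SNS SDK). Each subscribed queue gets its own copy of every message, wrapped in a simplified JSON envelope that mimics how SNS delivers to SQS by default: the original payload sits in the `Message` field unless raw message delivery is enabled on the subscription.

```python
import json
from collections import deque


class ToyTopic:
    """Hypothetical in-memory stand-in for an SNS topic with SQS subscribers."""

    def __init__(self, arn: str) -> None:
        self.arn = arn
        self.subscribers: dict[str, deque] = {}

    def subscribe(self, queue_name: str) -> deque:
        queue: deque = deque()
        self.subscribers[queue_name] = queue
        return queue

    def publish(self, message: str) -> int:
        """Deliver an independent copy of the message to every subscribed queue."""
        envelope = json.dumps(
            {"Type": "Notification", "TopicArn": self.arn, "Message": message}
        )
        for queue in self.subscribers.values():
            queue.append(envelope)
        return len(self.subscribers)


def read_job(envelope: str) -> dict:
    """What a worker does: unwrap the SNS envelope, then parse the original payload."""
    return json.loads(json.loads(envelope)["Message"])


if __name__ == "__main__":
    topic = ToyTopic("arn:aws:sns:us-east-1:123456789012:master-file-uploaded")
    thumbnails = topic.subscribe("thumbnail-jobs")  # hypothetical output type 1
    transcodes = topic.subscribe("transcode-jobs")  # hypothetical output type 2
    topic.publish(json.dumps({"bucket": "ForWorkBucket", "key": "uploads/video-001.mp4"}))
    print(read_job(thumbnails.popleft()) == read_job(transcodes.popleft()))
```

Because every queue holds its own copy, each worker group can consume, fail, and retry at its own pace without affecting the others.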

Each Auto Scaling group can be configured differently. For example, if one group needs to run a CPU-heavy algorithm, we can use instance types better suited to that job.

Just as in the single-output case, the EC2 instances do the work and upload the results to the second bucket. Each upload triggers the Lambda function, which notifies the front-end app that the processing job is done.

Other SNS fanout use cases

Fanout to the testing environment

You can also use this pattern to replicate the data sent to a production environment into a testing environment. You end up with one SQS queue subscribed for production and another for testing.

Fanout to HTTP/S endpoints

This works the same way as with SQS, but the subscribers are HTTP/S endpoints. SNS delivers each message to every subscribed endpoint as an HTTP POST request.
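An HTTP/S subscriber has to handle more than notifications: SNS first POSTs a `SubscriptionConfirmation` message whose `SubscribeURL` must be visited to confirm the subscription, and later POSTs `Notification` messages carrying the payload in `Message`. The sketch below only classifies an already-received JSON body; it assumes a web framework handles the HTTP layer, and it deliberately omits SNS signature verification, which a production endpoint should perform.

```python
import json


def handle_sns_post(raw_body: str) -> tuple[str, str]:
    """Classify an SNS HTTP/S delivery and return (action, value).

    Signature verification is intentionally omitted from this sketch.
    """
    body = json.loads(raw_body)
    msg_type = body.get("Type")
    if msg_type == "SubscriptionConfirmation":
        # The endpoint confirms the subscription by sending a GET to this URL.
        return "confirm", body["SubscribeURL"]
    if msg_type == "Notification":
        # The published payload is delivered as a string in the Message field.
        return "process", body["Message"]
    if msg_type == "UnsubscribeConfirmation":
        # Nothing to do in this sketch.
        return "ignore", ""
    raise ValueError(f"unexpected SNS message type: {msg_type!r}")


if __name__ == "__main__":
    print(handle_sns_post(json.dumps({"Type": "Notification", "Message": "hello"})))
```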

Fanout to Lambda

You can also fan out SNS messages to a Lambda function subscribed to the topic. The function receives the message payload as its input event, can manipulate that data, and can then publish a new message to other services.
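When SNS invokes a Lambda function, the event contains a `Records` list, and each record’s `Sns.Message` field holds the published payload as a string. The sketch below assumes that payload is JSON with hypothetical `bucket` and `key` fields; the `transform` step is an illustrative placeholder, and the function returns the new messages instead of publishing them, since the downstream service is not specified.

```python
import json


def transform(job: dict) -> dict:
    """Hypothetical manipulation step: derive an output key from the input key."""
    return {**job, "output_key": "processed/" + job["key"]}


def handler(event: dict, context: object) -> list[str]:
    """Lambda entry point for an SNS topic subscription."""
    outgoing = []
    for record in event.get("Records", []):
        payload = json.loads(record["Sns"]["Message"])
        new_message = json.dumps(transform(payload))
        # A real function would publish new_message to the next service here;
        # this sketch just collects and returns it.
        outgoing.append(new_message)
    return outgoing


if __name__ == "__main__":
    event = {"Records": [{"Sns": {"Message": json.dumps({"bucket": "ForWorkBucket", "key": "a.mp4"})}}]}
    print(handler(event, None))
```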

Fanout to Kinesis Data Firehose

In this case, messages published to the SNS topic are sent to Kinesis Data Firehose and delivered to its configured destination. You can subscribe up to 5 Kinesis Data Firehose delivery streams to an SNS topic and deliver those messages to Amazon S3, Amazon Redshift, Amazon OpenSearch Service, or third-party service providers.

In conclusion, this architecture pattern is most useful when a single event needs to spawn multiple independent work jobs, so consider it when you design such an application.